Discrete Information Sources

1 Exercise 1 DMS entropy

Compute the entropy of the following distribution:

$$S: \begin{pmatrix} s_1 & s_2 & s_3 & s_4 & s_5 \\ \frac{1}{2} & 0 & \frac{1}{8} & \frac{1}{4} & \frac{1}{8} \end{pmatrix}$$

1.1 Solution

The entropy of a DMS is given by:

$$H(S) = - \sum_{i=1}^{n} p(s_i) \log_2 p(s_i)$$

In this case, we have:

$$\begin{aligned}
H(S) & = - \frac{1}{2} \log_2 \frac{1}{2} - 0 \log_2 0 - \frac{1}{8} \log_2 \frac{1}{8} - \frac{1}{4} \log_2 \frac{1}{4} - \frac{1}{8} \log_2 \frac{1}{8} \\
& = - \frac{1}{2} (-1) - 0 - \frac{1}{8} (-3) - \frac{1}{4} (-2) - \frac{1}{8} (-3) \\
& = \frac{1}{2} + \frac{3}{8} + \frac{1}{2} + \frac{3}{8} \\
& = 1.75 \, \text{bits}
\end{aligned}$$

Note the situation when a probability is 0, as we have here with $p(s_2) = 0$. The corresponding term, $0 \log_2 0$, is an indeterminate form of type $0 \cdot \infty$.

The value of this term can be found as a limit, using l’Hôpital’s rule:

$$\begin{aligned}
\lim_{p \to 0} p \log_2 p &= \lim_{p \to 0} \frac{\log_2 p}{\frac{1}{p}} \\
&= \lim_{p \to 0} \frac{\frac{1}{p \ln 2}}{-\frac{1}{p^2}} \\
&= \lim_{p \to 0} \frac{-p}{\ln 2} \\
&= 0
\end{aligned}$$

Thus, a probability of zero does not contribute at all to the entropy, and can be safely ignored.
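The convention that zero-probability terms contribute nothing translates directly into code. The following is a minimal sketch, not part of the original exercise; the function name `entropy` is chosen here for illustration.

```python
import math

def entropy(probabilities):
    """Shannon entropy in bits; terms with p = 0 are skipped (0 * log2(0) -> 0)."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

# Distribution from Exercise 1
p = [1/2, 0, 1/8, 1/4, 1/8]
print(entropy(p))  # 1.75
```

Skipping the zero entries avoids calling `math.log2(0)`, which would raise an error rather than return the limit value.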

2 Exercise 2 Optimal questions

Consider the following game: I think of a number between 1 and 8 (each number is equally likely), and you have to guess it by asking yes/no questions.

Answer the following questions:

a). How much uncertainty does the problem have?

b). What is the best way to ask questions? Why?

c). On average, what is the number of questions required to find the number?

d). What if the questions are not asked in the best way?

2.1 Solution

a). How much uncertainty does the problem have?

The problem can be modeled as a DMS with 8 equiprobable messages:

$$S: \begin{pmatrix} s_1 & s_2 & s_3 & s_4 & s_5 & s_6 & s_7 & s_8 \\ \frac{1}{8} & \frac{1}{8} & \frac{1}{8} & \frac{1}{8} & \frac{1}{8} & \frac{1}{8} & \frac{1}{8} & \frac{1}{8} \end{pmatrix}$$

The uncertainty of the problem is given by the entropy of this source:

$$H(S) = - \sum_{i=1}^{8} \frac{1}{8} \log_2 \frac{1}{8} = 3 \, \text{bits}$$

b). What is the best way to ask questions? Why?

The best way is to ask questions that split the set of possible numbers into two sets of equal probability.

This is because the answer to each question behaves like a binary source, which provides maximum information when its two outcomes are equally likely:

$$S: \begin{pmatrix} \text{Yes} & \text{No} \\ \frac{1}{2} & \frac{1}{2} \end{pmatrix}$$

Thus, the best strategy is to maximize the entropy of the answer at each step.

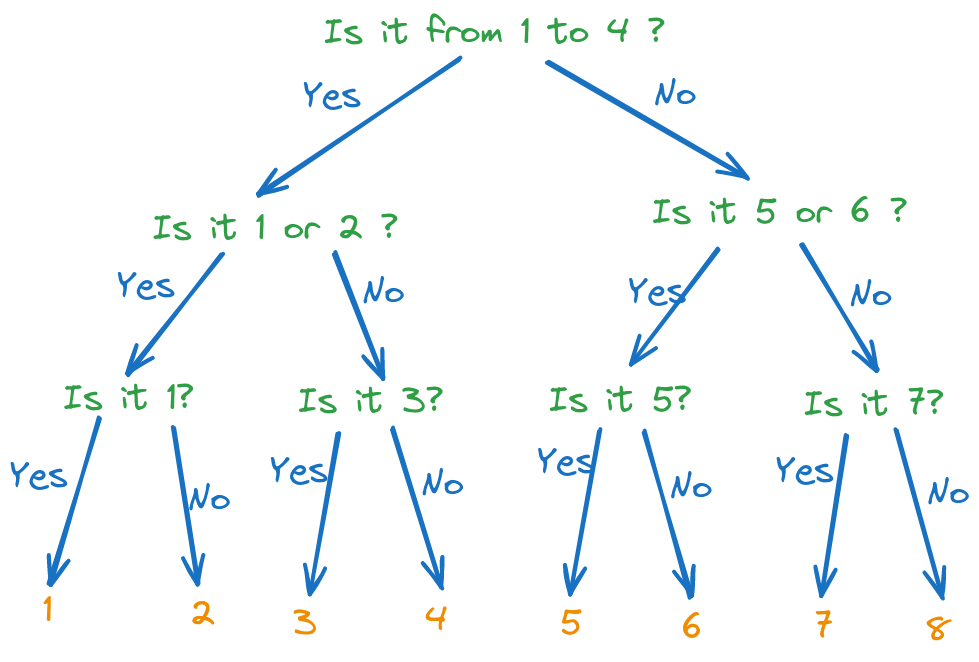

Figure 1: Optimal decision tree for guessing a number between 1 and 8

c). On average, what is the number of questions required to find the number?

With the optimal questions, the number of questions required to find the number is always 3.

This is because the entropy of the source is 3 bits, and the entropy of an answer is always 1 bit.

d). What if the questions are not asked in the best way?

This is only discussed during the lecture.

If questions are not asked in the best way, the Yes/No answers will not have equal probabilities, and therefore the answers will provide, on average, less than 1 bit of information.

Therefore the number of questions required to find the number will be, on average, more than three.
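The difference between a good and a poor questioning strategy can be checked by counting questions. The sketch below compares halving the range with one hypothetical non-optimal strategy, asking "Is it 1?", "Is it 2?", and so on; this second strategy and both function names are assumptions made for illustration, not part of the exercise.

```python
from fractions import Fraction

def questions_halving(target, low=1, high=8):
    """Count yes/no questions when each question halves the remaining range."""
    count = 0
    while low < high:
        mid = (low + high) // 2
        count += 1                  # ask: "Is the number <= mid?"
        if target <= mid:
            high = mid
        else:
            low = mid + 1
    return count

def questions_one_by_one(target, low=1, high=8):
    """Count questions when asking "Is it low?", "Is it low+1?", ... in order."""
    count = 0
    for guess in range(low, high):  # the last candidate needs no question
        count += 1
        if guess == target:
            break
    return count

numbers = range(1, 9)
avg_halving = Fraction(sum(questions_halving(t) for t in numbers), 8)
avg_one_by_one = Fraction(sum(questions_one_by_one(t) for t in numbers), 8)
print(avg_halving, avg_one_by_one)  # 3 35/8
```

Under these assumptions, halving always needs 3 questions, while asking one number at a time needs 35/8 = 4.375 questions on average.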

3 Exercise 3 Optimal decision tree ¶ What is the optimal decision tree for guessing a number chosen according to the following distribution:

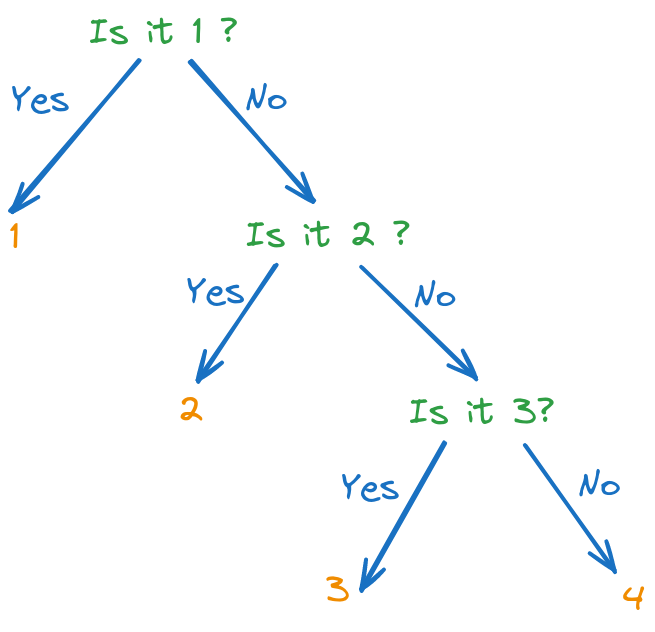

S : ( s 1 s 2 s 3 s 4 1 2 1 4 1 8 1 8 ) \sIV{S}{\fIoII}{\fIoIV}{\fIoVIII}{\fIoVIII} S : ( s 1 2 1 s 2 4 1 s 3 8 1 s 4 8 1 ) 3.1 Solution ¶ The difference with the previous case is that the probabilities are not equal.

But we still use the same principle: we want to maximize the entropy at each step,

which means that we want to split the set of possible numbers in two sets of equal probability. This corresponds to the following optimal decision tree:

Figure 2: Optimal decision tree for guessing a number between 1 and 4
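As a quick consistency check, the average number of questions can be compared with the entropy of the source. The worked equation below assumes the tree asks "Is it $s_1$?", then "Is it $s_2$?", then "Is it $s_3$?", which is what the equal-probability split implies; the symbol $\bar{l}$ for the average number of questions is notation introduced here, not taken from the exercise.

$$\begin{aligned}
\bar{l} &= \frac{1}{2} \cdot 1 + \frac{1}{4} \cdot 2 + \frac{1}{8} \cdot 3 + \frac{1}{8} \cdot 3 = 1.75 \, \text{questions} \\
H(S) &= \frac{1}{2} \log_2 2 + \frac{1}{4} \log_2 4 + \frac{1}{8} \log_2 8 + \frac{1}{8} \log_2 8 = 1.75 \, \text{bits}
\end{aligned}$$

Under this assumption the two values coincide, because every question splits the remaining probability exactly in half.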

4 Exercise 4 Optimal decision tree ¶ What is the optimal decision tree for guessing a number chosen according to the following distribution:

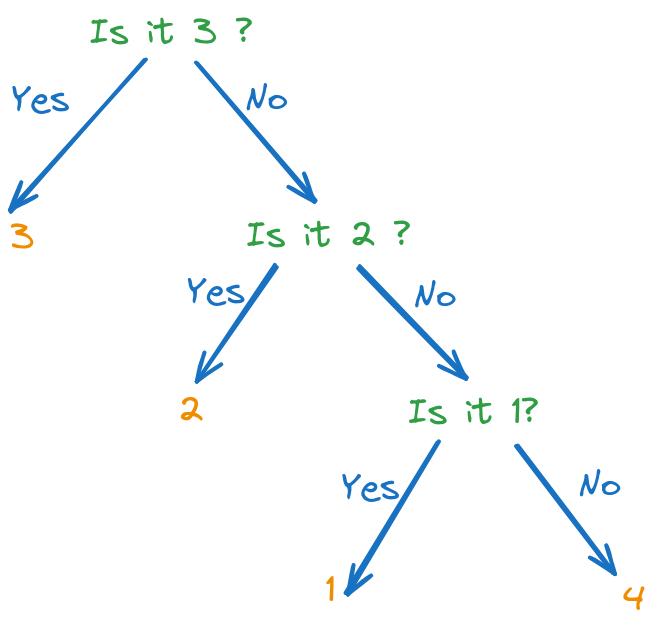

S : ( s 1 s 2 s 3 s 4 0.14 0.29 0.4 0.17 ) \sIV{S}{0.14}{0.29}{0.4}{0.17} S : ( s 1 0.14 s 2 0.29 s 3 0.4 s 4 0.17 ) 4.1 Solution ¶ We still use the same principle as before.

However, we cannot split the set of probabilities into

equal amounts every time. Instead, we try to split them

into sums which are as close to each other as possible ,

even though a perfect split is not always possible.

A good way to ask questions is shown below:

Figure 2: Optimal decision tree for guessing a number between 1 and 4
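The idea of splitting into sums that are as close as possible can be sketched with a brute-force search over all two-way splits. This is only an illustration of the first question; the helper name `most_balanced_split` is hypothetical, and whether its result matches the tree in the figure is not verified here.

```python
from fractions import Fraction
from itertools import combinations

def most_balanced_split(probabilities):
    """Return (group, rest, gap) for the two-way split with the closest sums."""
    names = list(probabilities)
    total = sum(probabilities.values())
    best = None
    # Try every non-empty proper subset as the "Yes" group.
    for size in range(1, len(names)):
        for group in combinations(names, size):
            p_group = sum(probabilities[name] for name in group)
            gap = abs(total - 2 * p_group)   # |P(yes) - P(no)|
            if best is None or gap < best[2]:
                rest = tuple(n for n in names if n not in group)
                best = (group, rest, gap)
    return best

# Exact fractions avoid floating-point ties between equally good splits.
source = {
    "s1": Fraction("0.14"),
    "s2": Fraction("0.29"),
    "s3": Fraction("0.4"),
    "s4": Fraction("0.17"),
}
group, rest, gap = most_balanced_split(source)
print(group, rest, float(gap))  # ('s1', 's3') ('s2', 's4') 0.08
```

The most balanced first question separates probabilities 0.54 and 0.46; the same search can then be repeated inside each group.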

5 Exercise 5 DMS

A DMS has the following distribution:

$$S: \begin{pmatrix} s_1 & s_2 & s_3 & s_4 & s_5 \\ \frac{1}{2} & 0 & \frac{1}{8} & \frac{1}{4} & \frac{1}{8} \end{pmatrix}$$

a). Compute the information of messages $s_1$, $s_2$ and $s_3$

b). Compute the average information of a message of the source

c). Compute the efficiency, absolute redundancy and relative redundancy of the source

d). Compute the probability of generating the sequence $s_1 s_4 s_3 s_1$

5.1 Solution

The information of a message is given by $- \log_2 p(s_i)$:

$$\begin{aligned}
i(s_1) & = - \log_2 \frac{1}{2} = 1 \, \text{bit} \\
i(s_2) & = - \log_2 0 = \infty \, \text{bits} \\
i(s_3) & = - \log_2 \frac{1}{8} = 3 \, \text{bits}
\end{aligned}$$

The average information of a message is the entropy:

$$H(S) = - \sum_{i=1}^{5} p(s_i) \log_2 p(s_i) = 1.75 \, \text{bits}$$

c). Compute the efficiency, absolute redundancy and relative redundancy of the source

For a DMS with 5 messages, the maximum entropy is $\log_2 5 = 2.32 \, \text{bits}$.

Therefore we have:

$$\begin{aligned}
\eta &= \frac{H(S)}{H_{max}} = \frac{1.75}{2.32} = \dots \\
R &= H_{max} - H(S) = 2.32 - 1.75 = 0.57 \, \text{bits} \\
\rho &= \frac{H_{max} - H(S)}{H_{max}} = \frac{0.57}{2.32} = \dots
\end{aligned}$$

d). Compute the probability of generating the sequence $s_1 s_4 s_3 s_1$

Since the source is memoryless, the messages are independent, and the probability of generating the sequence is the product of the individual message probabilities:

$$P(s_1 s_4 s_3 s_1) = p(s_1) \cdot p(s_4) \cdot p(s_3) \cdot p(s_1) = \frac{1}{2} \cdot \frac{1}{4} \cdot \frac{1}{8} \cdot \frac{1}{2} = \frac{1}{128}$$

6 Exercise 6 Kullback-Leibler distance

Compute the KL distance between the following two probability distributions:

$$P = [0 \;\;\; 0 \;\;\; 0 \;\;\; 1], \qquad Q = [0.1 \;\;\; 0.05 \;\;\; 0.05 \;\;\; 0.8]$$

6.1 Solution

Recall the definition of the KL distance:

$$D_{KL}(P,Q) = \sum_{i=1}^{n} p_i \log_2 \frac{p_i}{q_i}$$

In our case, we have:

$$\begin{aligned}
D_{KL}(P,Q) & = 0 \log_2 \frac{0}{0.1} + 0 \log_2 \frac{0}{0.05} + 0 \log_2 \frac{0}{0.05} + 1 \log_2 \frac{1}{0.8} \\
& = 0 + 0 + 0 + 1 \cdot 0.3219 \\
& = 0.3219 \, \text{bits}
\end{aligned}$$

Again, we have used the limit

$$\lim_{p \to 0} p \log_2 \frac{p}{q} = 0$$

for the terms where $p_i = 0$.
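The same zero-term convention carries over to a numerical computation of the KL distance. The following is a minimal sketch with a function name, `kl_divergence`, chosen here for illustration; it assumes $q_i > 0$ wherever $p_i > 0$ and does not handle the opposite case.

```python
import math

def kl_divergence(p, q):
    """D_KL(P, Q) in bits; terms with p_i = 0 are skipped, following the limit."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

P = [0, 0, 0, 1]
Q = [0.1, 0.05, 0.05, 0.8]
print(round(kl_divergence(P, Q), 4))  # 0.3219
```

Only the last term survives, so the result equals $\log_2 \frac{1}{0.8}$.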

7 Exercise 7 Source with memory ¶ This is a single exercise with many questions.

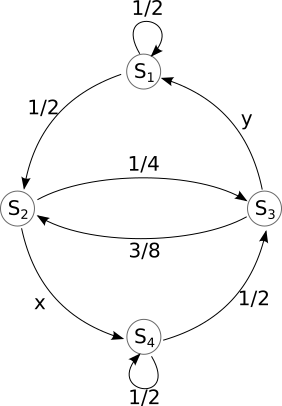

Consider a discrete, complete, information source with memory,

with the graphical representation given below.

Figure 4: Graphical representation of the source

The states are defined as follows:

State

Definition

S 1 S_1 S 1

s 1 s 1 s_1s_1 s 1 s 1

S 2 S_2 S 2

s 1 s 2 s_1s_2 s 1 s 2

S 3 S_3 S 3

s 2 s 1 s_2s_1 s 2 s 1

S 4 S_4 S 4

s 2 s 2 s_2s_2 s 2 s 2

Questions:

a. What are the values of $x$ and $y$?

b. What are the memory order, $m$, and the number of messages, $n$?

c. Write the transition matrix $[T]$

d. What is the probability of generating $s_1$ in state $S_3$?

e. If the initial state is $S_4$, what is the probability of generating the sequence $s_1 s_2 s_2 s_1$?

f. Compute the entropy in state $S_4$

g. Compute the global entropy of the source

h. If the source is initially in state $S_2$, in which state will it most likely be after 2 messages?

7.1 Solution

a. What are the values of $x$ and $y$?

We find the values of $x$ and $y$ from the condition that the probabilities of all transitions leaving a state sum to 1.

Therefore we have $x = \frac{3}{4}$ and $y = \frac{5}{8}$.

b. What are the memory order, $m$, and the number of messages, $n$?

We take a look at the graphical representation of the source and the definition of the states. From the state definition it is clear that there are only two messages, $s_1$ and $s_2$, so $n = 2$.

The memory order is the number of messages which define a state, so it is also 2: $m = 2$.

c. Write the transition matrix $[T]$

Since there are 4 states, $[T]$ is a $4 \times 4$ matrix, where $p_{ij}$ is the probability of the transition from state $S_i$ to state $S_j$:

$$[T] = \begin{bmatrix}
\frac{1}{2} & \frac{1}{2} & 0 & 0 \\
0 & 0 & \frac{1}{4} & \frac{3}{4} \\
\frac{5}{8} & \frac{3}{8} & 0 & 0 \\
0 & 0 & \frac{1}{2} & \frac{1}{2}
\end{bmatrix}$$

Note how the sum of every row is equal to 1.
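The row-sum property is easy to verify mechanically. Here is a minimal sketch that stores the matrix as exact fractions; the variable names `T` and `row_sums` are chosen here for illustration.

```python
from fractions import Fraction as F

# Transition matrix [T]; row i holds the probabilities of leaving state S_(i+1).
T = [
    [F(1, 2), F(1, 2), F(0),    F(0)],
    [F(0),    F(0),    F(1, 4), F(3, 4)],
    [F(5, 8), F(3, 8), F(0),    F(0)],
    [F(0),    F(0),    F(1, 2), F(1, 2)],
]

# Every row must sum to 1, since the source always moves to some state.
row_sums = [sum(row) for row in T]
print(all(s == 1 for s in row_sums))  # True
```

Using `Fraction` instead of floats keeps the check exact.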

d. What is the probability of generating $s_1$ in state $S_3$?

If the current state is $S_3$, the last two messages were $s_2$ and $s_1$. When a new $s_1$ is generated, the oldest message $s_2$ is dropped and the new $s_1$ is appended, so the last two messages become $s_1 s_1$, which is state $S_1$.

Therefore, generating $s_1$ in state $S_3$ means a transition from $S_3$ to $S_1$, which has probability $\frac{5}{8}$.
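The "drop the oldest message, append the new one" rule can be written as a small helper. This is a sketch under the state definitions given in the table; the names `STATES`, `next_state`, `emit_probability` and `sequence_probability` are hypothetical and not taken from the exercise.

```python
from fractions import Fraction as F

# States as pairs of the last two messages, mapped to row/column indices of [T].
STATES = {("s1", "s1"): 0, ("s1", "s2"): 1, ("s2", "s1"): 2, ("s2", "s2"): 3}

T = [
    [F(1, 2), F(1, 2), F(0),    F(0)],
    [F(0),    F(0),    F(1, 4), F(3, 4)],
    [F(5, 8), F(3, 8), F(0),    F(0)],
    [F(0),    F(0),    F(1, 2), F(1, 2)],
]

def next_state(state, message):
    """Drop the oldest message and append the new one."""
    return (state[1], message)

def emit_probability(state, message):
    """Probability of generating `message` while in `state`."""
    return T[STATES[state]][STATES[next_state(state, message)]]

def sequence_probability(state, messages):
    """Multiply the transition probabilities along a sequence of messages."""
    probability = F(1)
    for message in messages:
        probability *= emit_probability(state, message)
        state = next_state(state, message)
    return probability

S3 = ("s2", "s1")
print(next_state(S3, "s1"), emit_probability(S3, "s1"))  # ('s1', 's1') 5/8

S4 = ("s2", "s2")
print(sequence_probability(S4, ["s1", "s2", "s2", "s1"]))  # 9/128
```

The second call chains the same helper over the sequence from question e, multiplying one transition probability per generated message.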

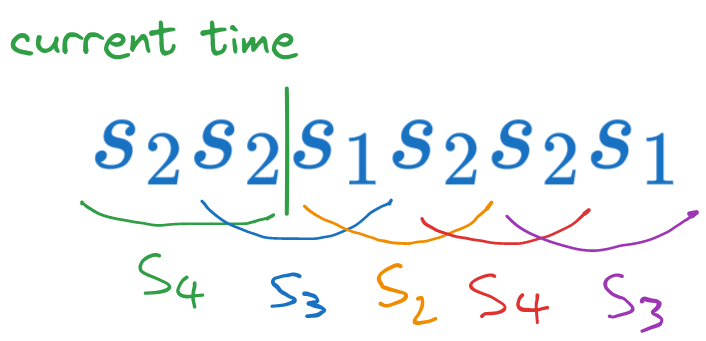

e. If the initial state is S 4 S_4 S 4 s 1 s 2 s 2 s 1 s_1 s_2 s_2 s_1 s 1 s 2 s 2 s 1 ¶ This is an extension of the previous question. We start in state S 4 S_4 S 4 s 1 s_1 s 1 S 3 S_3 S 3 s 2 s_2 s 2 S 2 S_2 S 2 s 2 s_2 s 2 S 4 S_4 S 4 s 1 s_1 s 1 S 3 S_3 S 3 S 4 → S 3 → S 2 → S 4 → S 3 S_4 \rightarrow S_3 \rightarrow S_2 \rightarrow S_4 \rightarrow S_3 S 4 → S 3 → S 2 → S 4 → S 3

Figure 5: Sequence of states when generating s 1 s 2 s 2 s 1 s_1 s_2 s_2 s_1 s 1 s 2 s 2 s 1 S 4 S_4 S 4

Each transition has a probability, found in the transition matrix, and we multiply them together to obtain the probability of the chain:

$$\begin{aligned}
P &= P(S_4 \rightarrow S_3) \cdot P(S_3 \rightarrow S_2) \cdot P(S_2 \rightarrow S_4) \cdot P(S_4 \rightarrow S_3) \\
&= P(S_3|S_4) \cdot P(S_2|S_3) \cdot P(S_4|S_2) \cdot P(S_3|S_4) \\
&= \frac{1}{2} \cdot \frac{3}{8} \cdot \frac{3}{4} \cdot \frac{1}{2} \\
&= \frac{9}{128}
\end{aligned}$$

f. Compute the entropy in state $S_4$

In state $S_4$, the source generates $s_1$ or $s_2$ with the probabilities given by the fourth row of the transition matrix:

$$[0 \quad 0 \quad \frac{1}{2} \quad \frac{1}{2}]$$

The entropy in state $S_4$ is:

$$H(S_4) = - \frac{1}{2} \log_2 \frac{1}{2} - \frac{1}{2} \log_2 \frac{1}{2} = 1 \, \text{bit}$$

g. Compute the global entropy of the source

First we compute the entropy in every state, then we compute the stationary probabilities, and then we compute the average.

The entropy in every state is:

$$\begin{aligned}
H(S_1) &= - \frac{1}{2} \log_2 \frac{1}{2} - \frac{1}{2} \log_2 \frac{1}{2} = 1 \, \text{bit} \\
H(S_2) &= - \frac{1}{4} \log_2 \frac{1}{4} - \frac{3}{4} \log_2 \frac{3}{4} = 0.8113 \, \text{bits} \\
H(S_3) &= - \frac{5}{8} \log_2 \frac{5}{8} - \frac{3}{8} \log_2 \frac{3}{8} = 0.9544 \, \text{bits} \\
H(S_4) &= - \frac{1}{2} \log_2 \frac{1}{2} - \frac{1}{2} \log_2 \frac{1}{2} = 1 \, \text{bit}
\end{aligned}$$

Next, we compute the stationary probabilities from the system:

$$[p_1, p_2, \dots, p_N] \cdot [T] = [p_1, p_2, \dots, p_N]$$

which, in our case, means the following system of equations, written either in matrix form or as separate equations:

$$[p_1 \quad p_2 \quad p_3 \quad p_4] \cdot
\begin{bmatrix}
\frac{1}{2} & \frac{1}{2} & 0 & 0 \\
0 & 0 & \frac{1}{4} & \frac{3}{4} \\
\frac{5}{8} & \frac{3}{8} & 0 & 0 \\
0 & 0 & \frac{1}{2} & \frac{1}{2}
\end{bmatrix}
=
[p_1 \quad p_2 \quad p_3 \quad p_4]$$

i.e.:

$$\begin{cases}
\frac{1}{2}p_1 + \frac{5}{8} p_3 &= p_1 \\
\frac{1}{2}p_1 + \frac{3}{8} p_3 &= p_2 \\
\frac{1}{4}p_2 + \frac{1}{2} p_4 &= p_3 \\
\frac{3}{4}p_2 + \frac{1}{2} p_4 &= p_4
\end{cases}$$

However, this system is not linearly independent: one equation depends on the others.

Therefore, we eliminate one of the equations (any one, whichever looks harder), and we replace it with $p_1 + p_2 + p_3 + p_4 = 1$.

Eliminating the second equation in the system results in:

$$\begin{cases}
\frac{1}{2}p_1 + \frac{5}{8} p_3 &= p_1 \\
\frac{1}{4}p_2 + \frac{1}{2} p_4 &= p_3 \\
\frac{3}{4}p_2 + \frac{1}{2} p_4 &= p_4 \\
p_1 + p_2 + p_3 + p_4 &= 1
\end{cases}$$

Solving this system through any method gives the stationary probabilities:

$$\begin{aligned}
p_1 &= \dots \\
p_2 &= \dots \\
p_3 &= \dots \\
p_4 &= \dots
\end{aligned}$$

Finally, we compute the global entropy of the source as the average of the state entropies, weighted by the stationary probabilities:

$$\begin{aligned}
H(S) &= p_1 H(S_1) + p_2 H(S_2) + p_3 H(S_3) + p_4 H(S_4) \\
&= \dots
\end{aligned}$$

h. If the source is initially in state $S_2$, in which state will it most likely be after 2 messages?

We use the general formula which gives the probabilities at time $(n+1)$ from the probabilities at time $n$:

$$[p_1^{(n)}, p_2^{(n)}, \dots , p_N^{(n)}] \cdot [T] = [p_1^{(n+1)}, p_2^{(n+1)}, \dots , p_N^{(n+1)}]$$

In our case, the initial probabilities are $[0, 1, 0, 0]$, since the source starts in state $S_2$, and we multiply twice by $[T]$:

$$\begin{bmatrix}
0 & 1 & 0 & 0
\end{bmatrix}
\cdot [T] \cdot [T] = [p_1^{(2)}, p_2^{(2)}, p_3^{(2)}, p_4^{(2)}]$$

Therefore we have:

$$\begin{aligned}
[p_1^{(2)}, p_2^{(2)}, p_3^{(2)}, p_4^{(2)}] &=
\begin{bmatrix}
0 & 1 & 0 & 0
\end{bmatrix}
\begin{bmatrix}
\frac{1}{2} & \frac{1}{2} & 0 & 0 \\
0 & 0 & \frac{1}{4} & \frac{3}{4} \\
\frac{5}{8} & \frac{3}{8} & 0 & 0 \\
0 & 0 & \frac{1}{2} & \frac{1}{2}
\end{bmatrix}
\begin{bmatrix}
\frac{1}{2} & \frac{1}{2} & 0 & 0 \\
0 & 0 & \frac{1}{4} & \frac{3}{4} \\
\frac{5}{8} & \frac{3}{8} & 0 & 0 \\
0 & 0 & \frac{1}{2} & \frac{1}{2}
\end{bmatrix}\\
&=
\begin{bmatrix}
0 & 0 & \frac{1}{4} & \frac{3}{4}
\end{bmatrix}
\begin{bmatrix}
\frac{1}{2} & \frac{1}{2} & 0 & 0 \\
0 & 0 & \frac{1}{4} & \frac{3}{4} \\
\frac{5}{8} & \frac{3}{8} & 0 & 0 \\
0 & 0 & \frac{1}{2} & \frac{1}{2}
\end{bmatrix}\\
&=
\begin{bmatrix}
\frac{5}{32} & \frac{3}{32} & \frac{3}{8} & \frac{3}{8}
\end{bmatrix}
\end{aligned}$$

The most likely states after 2 messages are $S_3$ and $S_4$, each with probability $\frac{3}{8}$, given that the source starts in state $S_2$.
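The vector-matrix products in parts g and h can be cross-checked numerically. The sketch below is an illustration rather than the method used in the solution: it approximates the stationary probabilities by repeatedly applying $[T]$ (power iteration) instead of solving the linear system, and the names `step` and `state_entropy` are hypothetical.

```python
import math

T = [
    [1/2, 1/2, 0, 0],
    [0, 0, 1/4, 3/4],
    [5/8, 3/8, 0, 0],
    [0, 0, 1/2, 1/2],
]

def step(p, T):
    """One time step: row vector p multiplied by matrix T."""
    n = len(T)
    return [sum(p[i] * T[i][j] for i in range(n)) for j in range(n)]

def state_entropy(row):
    """Entropy (bits) of the messages generated from one state."""
    return -sum(x * math.log2(x) for x in row if x > 0)

# Part h: start in S2 and advance two messages.
p2 = step(step([0, 1, 0, 0], T), T)
print(p2)  # [0.15625, 0.09375, 0.375, 0.375]

# Part g: approximate the stationary probabilities by iterating p <- p * T,
# then average the state entropies with these weights.
p = [1/4] * 4
for _ in range(200):
    p = step(p, T)
H = sum(pi * state_entropy(row) for pi, row in zip(p, T))
print(p, H)
```

The iteration converges for this source because every state can be reached from every other and $S_1$ can follow itself; for other sources this should be checked before relying on the approach.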