1Introduction¶

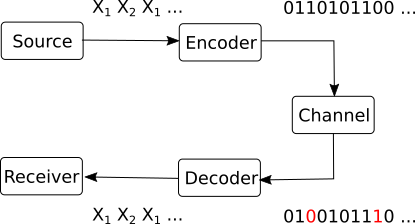

Remember the block scheme of a communication system:

Figure 1:Communication system

Error control coding is the second task of coding in a communication system, and refers to the protection of information against channel errors.

During any transmission, the data (symbols, bits) go through a transmission channel, The transmission channel is not ideal, and in particular it introduces errors. For example, some bit values may be changed due to electrical noise: a bit is sent as 0, but received as 1, or viceversa. This is a problem in all communication systems, and to some degree it is unavoidable, in general, because electrical noise is unavoidable.

However, we usually require that all data is received correctly. For example, when you download a file from the internet, you want to be sure that the file is exactly the same as the original. For example, a single erroneous bit can make a zip file unreadable.

Error control coding refers to all the algorithms which have been developed with th purpose of protecting against errors in the data.

1.1Error model¶

We first need to clarify the assumptions and the scope of the current discussion.

First, we consider only binary codes and binary channels, i.e. all the data is represented using binary symbols .

An error is defined as bit that has changed from 0 to 1 or from 1 to 0 while going through channel. In these lectures we consider only these types of errors, and not, for example, situations where bits are lost.

Errors can appear in different ways:

independent errors are sporadic, independent of all the others. We consider each bit as having a random chance of undergoing an error, without any relation to the surrounding bits.

For example, if , its means that each bit has a chance of 1 in a million of being changed. This means an average of 1 error in a million bits. A file of 100 MB will have around 800 bit errors (100 MB = 100 megabytes = 800 million bits).

burst or packets of errors are groups of consecutive errors. This can happen in some channels, for example in wireless transmissions, where a sudden millisecond drop in in signal quality can affect a whole sequence of bits.

Packets of errors are much harder to correct than independent errors, because the whole context in that region of the bitstream is lost.

When considering that a bit changes its value due to an error, it is very helpful to treat this as a mathematical operation. This will allow us to use mathematical tools to design error control mechanisms.

We introduce therefore the modulo-2 sum operation, denoted , with the following properties:

It operates only on individual bits (0 or 1)

It is defined by the following table:

This means:

It is also known as the exclusive OR operation (XOR).

Besides the module-2 sum, we can also have the normal product operation between bits (i.e. , ). Together, they form a modulo-2 arithmetic system known as Galois field GF(2), which supports operations with bits in a way similar to normal arithmetic:

We can sum bits (with modulo-2 sum)

We can multiply bits (with normal product)

We can subtract bits, also with modulo-2 sum. Subtraction is the same as addition, since in modulo-2 arithmetic. There are no negative numbers, each number is its own negative, since .

We can use parantheses as usual, i.e.:

We can define equation systems, with all coefficients and variables being bits

We can define polynomials which have bits as coefficients, e.g.

When a bit changes its value, we may think that it undergoes modulo-2 sum with 1. Given a bit and its complement , we have:

irrespective of the value of (0 or 1)

When a bit remains the same, we may think that it undergoes modulo-2 sum with 0, since:

for any bit .

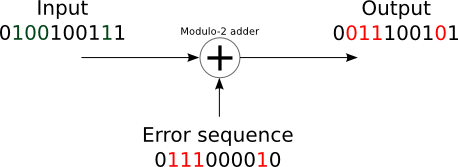

We can extend this to a sequence of bits, by performing the modulo-2 sum bit by bit. Suppose we transmit a bit sequence :

which is received, with errors on the 2nd, 3rd, 4th and 9th positions, as the sequence :

we can consider as being obtained by the modulo-2 sum of with an error sequence :

The error sequence has values of 1 wherever there is an error, and 0 otherwise:

Figure 2:Channel error model

Therefore, we model the effect of the channel as this modulo-2 sum with the error sequence. This constitutes our model for the transmission channel, for the purpose of error control coding.

1.2Error detection vs error correction¶

What can we do about errors? In general there are two approaches

Error detection means finding out if there is any error in the received sequence, but not exactly which bits have the errors.

Since we don’t know exactly where are the errors, we cannot correct the bits. Instead, the whole sequence is discarded, and the sender is asked to retransmit it.

This is possible in live communication systems, where the sender can retransmit the data, as for example in the TCP/IP protocol used on the internet.

Error correction means finding out if there is any error in the received sequence and find out exactly which bits have errors. Once we locate the errors, we can correct them, by inverting the bits, and obtain the original data back.

This is especially useful when we cannot retransmit the data, for example when the data is stored on a physical medium (e.g. on a disk).

Error detections is easier to achieve than error correction, because it doesn’t require to know exactly where the errors are. However, there exist situations where error correction is necessary,

1.3Definitions and overview of error control coding¶

We first give a general schematic of the error control process, with the purpose of fixing general definitions and notation. The process is tailored to block codes, which are the most common type of error control codes.

The sender needs to send a sequence of bits, which is the information word:

For each possible information word , the coder assigns a codeword of length :

The codeword is sent on the channel instead of the original information word

The receiver receives a sequence , known ad the received word, with possible errors:

The decoding algorithm detects and/or corrects thformse errors.

If it performs only detection, it reports whether there are errors or not in

If it performs correction, it tries to find the correct codeword and information word from .

Both detection and correction may fail, if the errors are too numerous. This is why we denote the output of the decoding algorithm as and , to indicate that they are not necessarily the same as the original and .

We provide below a list of definitions.

An error correcting code is an association between the set of all possible information words to a set of codewords.

It can be represented as a table in which each possible information word has a certain codeword .

The association can be done in many ways:

randomly: codewords are selected and associated randomly to the information words

based on a certain rule: the codeword is computed with some algorithm from the information word

A code is a block code if it operates with words of fixed size. Otherwise it is a non-block code

For a block code, the size of information word is typically denoted as , and the size of codeword by , with .

A code is linear if any linear combination of codewords is also a codeword.

A code is called systematic if the codeword contains all the information bits explicitly, unaltered, usually at the beginning or at the end of the codeword.

This means that the coding process merely adds supplementary bits besides the information bits, and the codeword has two parts: the information bits and the parity bits.

Example: adding a parity bit after the information bits

Otherwise the code is called non-systematic. In this case, the information bits are not explicitly visible in the codeword.

The coding rate of a block-code is:

A code is an -error-detecting code if it is able to detect or less errors

A code is an -error-correcting code if it is able to correct or less errors

Further examples: at blackboard

1.4Naive examples¶

A first example: parity bit¶

One of the simplest ways to do error control coding is to add a parity bit to the information word.

The parity bit is defined as the module-2 sum of all the bits in the information word, i.e. it is 0 if there are an even number of 1’s in the information word, and 1 if there are an odd number of 1’s.

The codeword is obtained by appending the parity bit at the end of the information word.

Consider an information word of length bits, to which we append a parity bit, resulting in a codeword of length bits.

The receiver checks if the parity bit is still equal to the modulo-2 sum of the information bits.

What can we say about this coding procedure:

It can detect 1 error in a 9-bit codeword, because if there is 1 error in the codeword, the parity bit will not anymore match the information bits, and the receiver will understand that an error must have happened.

It is not able to detect 2 errors because if there are 2 errors in the codeword, the parity bit will still match the information bits, and the receiver will not know that there are errors.

Therefore this procedure is a 1-error-detecting

Even for 1 error, The receiver cannot correct the error, because it doesn’t know where the error is located. Therefore this is a 0-error-correcting code.

The coding rate is

The coding algorithm could be improved by adding more parity bits, (see example at blackboard)

A second example: repetition code¶

Let’s discuss another basic way of doing error control coding, the repetition code.

The codeword is created by repeating the information word times in a row. As an example, for , to transmit we actually send on the channel

The receiver uses a majority rule for decoding: knowing that each bit is repeated times on certain locations, it decides that the correct value of each bit is the one that appears most often among those locations.

For example, if it receives , the decoder views the sequence as 5 groups of 3 bits, and takes the majority value in each column:

Let’s analyze this code:

It can detect up to 4 errors, because for 4 or fewer errors the decoder will understand some errors must be in the codeword, since the 5 groups are not all the same.

It cannot detect 5 errors everytime, because if the 5 errors affect the same column, all the resulting bits will be the same, and the decoder is fooled into believing everything is correct.

Therefore the code is 4-error-detecting (in general, -error-detecting).

It can correct up to 2 errors, because even if 2 errors affect the same column, the correct value will still appear more often than the wrong value, and the majority rule will identify the errors correctly.

It is not able to correct 3 errors everytime, because if 3 errors affect the same column, the wrong value will appear more often than the correct value, and the majority rule will produce the wrong result.

Therefore the code is 2-error-correcting (in general, -error-correcting)$.

The coding rate

If the number of repetitions is increased, the code becomes more robust to errors, but the coding rate decreases, i.e. more redundancy is added.

Redundancy¶

We can see in these examples that error control coding works by adding redundancy to the data, i.e. adding additional bits, artificially created according to some rule.

The decoding algorithm will then check if the rule still holds:

if yes, it will decide that there are no errors

if not, it will decide that there are errors

Note that error control coding adds redundancy to the data, while source coding aims to reduce redundancy. Is there a contradiction?

Well, no, because the two types of redundancy are different. Source coding reduces existing redundancy from the data, which served no purpose. Error control coding adds redundancy in a controlled way, with a purpose. The purpose is for the decoder to be able to check if the data is correct or not.

In practice, source coding and error control coding are done sequentially, in an independent manner.

First perform source coding, eliminating redundancy in representation of data

Then perform error control coding, adding redundancy for protection

1.5Coding rate and channel capacity¶

This is a preview of something which will be discussed in the next chapters, but relates to the coding rate of a code, so we can introduce it here.

In Chapter IV we will study transmission channels, which are mathematical model of how information is handled from the sender to the receiver. In that chapter we will find that each channel has a certain capacity value.

The channel capacity is the maximum amount of information than can be sent over the channel with one symbol, on average.

For example, a binary channel (working with bits 0 and 1) may have capacity bits, i.e. for each physical bit delivered, the mathematical information actually transmitted is 0.8.

The channel capacity is related to the coding rate of error control codes via Shannon’s noisy channel coding theorem.

The rigorous proof of the theorem is too complex to present here. Some key ideas of the proof are:

Use very long information words,

Use random codes, and compute the probability of having error after decoding

If , in average for all possible codes, the probability of error after decoding goes to 0. This means that there exists at least one code better than the average, therefore this code can correct all errors, and it is the code which we should use. Thus, the coding rate is achievable.

In layman terms, the theorem says that:

For all coding rates , there is a way to recover the transmitted data perfectly (decoding algorithm will detect and correct all errors)

For all coding rates , there is no way to recover the transmitted data perfectly

As a numerical example, suppose we send bits on a binary channel with capacity 0.7 bits/message.

For any coding rate the theorem states that there exists an error correction code that allows fixing of all errors. Coding rate means we send more than 10 bits for every 7 information bits, on average.

With less than 10 bits for every 7 information bits, i.e. , no code exists that can fix all errors.

The theorem makes it clear when it is possible to fix all errors, giving a clear limit to the coding rate required for this to happen. Moreover, it guarantees that such a code exists, but it doesn’t tell us how to find it. It gives no clue of how to actually find the code in practice, only some general principles derived from the proof:

using longer information words is better

random codewords are generally good (though in practice they are not efficient to use, because thet don’t have a simple implementation)

In practice, we cannot use infinitely long codewords, and random codewords are no efficient to implement, so we will only get a good enough code.

2Analyzing linear block codes with the Hamming distance¶

2.1The Hamming distance¶

We start from a practical idea which we can deduce from what we discussed until now.

If a codeword has errors and, as a consequence, it becomes identical to another codeword , any decoder will be fooled into thinking everything is correct, and it has received a correct codeword . Thus the errors go undetected.

Therefore, we want the codewords to be as different as possible from each other. The Hamming distance is a way of computing how different two codewords are.

The Hamming distance of two binary sequences a and b having the same length , , is the total number of bit differences between them

The Hamming distance shows how many bit changes are needed to convert one sequence into the other one.

The Hamming distance satisfies the 3 properties of a metric function:

, with

The minimum Hamming distance of a code, denoted as , is the minimum Hamming distance between any two codewords and . We find it by taking all the pairs of codewords, computing the Hamming distance between them, and taking the minimum value.

The minimum Hamming distance of a code guarantees that all codewords are at least bits apart from each other.

2.2Nearest-neighbor decoding scheme¶

We introduce a simple decoding scheme, called nearest-neighbor decoding, which serves as the underlying approach for many error control codes.

Coding

We use a code with large , to make the codewords as different as possible. The code (i.e. all the possible codewords) is known to both the sender and the receiver.

One of the codewords is sent.

Decoding

Receive a word , that may have errors.

Error detecting:

check if is among the codewords of the code

if is among the codewords, decide that there have been no errors

if is not among the list of codewords, decide that there have been errors

Error correcting:

if is among the codewords, decide there are no errors

else, choose the codeword from which is nearest to the received , in terms of Hamming distance. Decide that the correct codeword is this one. This is known as nearest-neighbor decoding

The performance of this scheme, in terms of how many errors it can detect and correct, is related to the minimum Hamming distance of the code. As the next theorem shows, a larger means better performance. Note, however, that increasing the usually means longer codewords, i.e. smaller coding rate, i.e. more redundancy, so there is a price to pay for better performance.

The theorem gives the maximum number of errors for which the decoding scheme is guaranteed to work. If the number of errors is higher than these limits, the decoding scheme may or may not work, depending on the specific errors. We may have:

Detection failure: decide that there were no errors, even if they were (more than )

Correction failure: choose a wrong codeword as original

2.3Computational complexity¶

The nearest-neighbor decoding scheme is simple to understand and implement, and it underpins many error control codes (if not all). However, it in not always used in practice, because of a fundamental problem: it requires a lot of computational resources, especially for large codes (e.g. in practice).

To understand this, we need to use the concept of computational complexity of an algorithm, coming from computer science.

The computational complexity of an algorithm is the amount of computational resources required by the algorithm, expressed as a function of the size of the input data. In general, we are interested in the order of magnitude of the computational complexity, i.e. the dominant term in the expression, neglecting the other terms and the coefficients.

The computational complexity of an algorithm is expressed using the big-O notation, for example , which means that the computational complexity is proportional to , or , which means that the computational complexity is proportional to . This gives an idea about how the computational complexity grows with the size of the input data, .

For the nearest-neighbor decoding scheme, if the number of information bits is , the computational complexity is , which is absolutely huge for large .

This is because both error detection and correction require comparing the received word with all the possible codewords, and there are codewords in total, one for each possible information word. Thus, the algorithm needs to go through an array of size to find the correct codeword.

A computation complexity of means that the algorithm is very inefficient, and is not feasible in practice:

when doubles, the amount of computations is squared

when increases 10 times, the amount of computations is raised to a power of 10

when increases 100 times, the amount of computations is raised to a power of 1000

for bits, you’d need more than all the energy of the Sun (not a joke, it can be calculated) to go through a list of size 2256 codewords.

Therefore, we need ways to make decoding simpler and more efficient.

3Analyzing linear block codes with matrix algebra¶

3.1Vector algebra in a binary world¶

A short review of basic vector algebra¶

A vector space is a set with two operations, sum and multiplication by a constant, having the following properties:

an element + another element = still an element from the set

an element a constant = still an element from the set

In other words, we cannot escape from the set by summing or multiplying by a constant.

The elements of a vector space are called “vectors”.

Intuitive examples of vector spaces are Euclidian (geometrical) vector spaces: a line, points in 2D, 3D. For example, adding two vectors in a 2D plane gives another vector in the same 2D plane, and multiplying a vector by a constant gives another vector in the same 2D plane.

A basis of a vector space is a set of independent vectors , such that any vector can be expressed as a linear combination of the basis elements

A subspace is a smaller dimensional vector space embedded inside a larger vector space.

For example, a line in a 2D plane is a subspace of the 2D plane:

sum of two vectors on the line is still on the same line

the size of the line subspace is 1, and the embedding plane has size 2

Another example is a 2D plane in 3D space:

sum of two vectors from the plane is still on the plane

size of subspace is 2, size of the larger space is 3

How to look at matrix-vector multiplications¶

When we multiply a matrix with a vector (in this order), the output is a column vector which is a linear combination of the columns of the matrix. The multiplicating vector gives the coefficients of the linear combination.

When we multiply a vector with a matrix (in this order), the output is a row vector which is a linear combination of the rows of the matrix. The multiplicating vector gives the coefficients of the linear combination.

TODO: Explain with draw picture

Vector spaces can be perfectly described with matrix-vector multiplications

Matrix columns/rows = elements of the basis

The output vector = the vector

The multiplicated vector = the coefficients of the linear combination

Any vector can be expressed as a linear combination of the basis elements:

where are column vectors.

The equation can be transposed, which would mean that all vectors become row vectors, but the usual mathematical convention is to use column vectors wherever possible.

Vector spaces of binary codewords¶

Furthermore the codewords of a linear block code with code length and information length is a vector subspace of the larger vector space of all binary sequences of size .

You can imagine the set of codewords as forming a 2D plane embedded in a 3D space. All codewords live in this plane, and all point on this plane are codewords. The larger space contains lots of other points which are not on the plane, so are not codewords, just like there are many sequences of size which are not codewords.

Since all codewords of a linear block code form a subspace, it follows that all codewords can be expressed as matrix-vector multiplications.

3.2The generator matrix¶

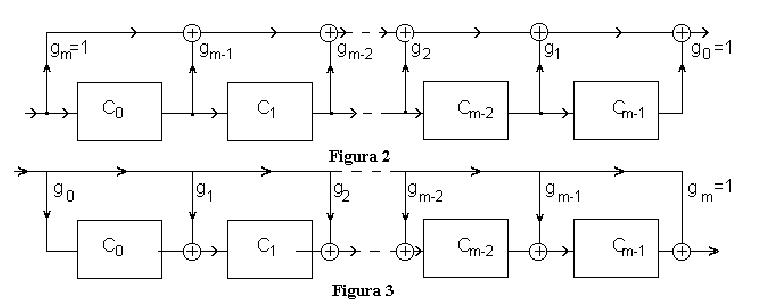

All codewords for a linear block code can be generated via a vector-matrix multiplication as follows:

Figure 3:Codeword construction with generator matrix

The matrix is known as the generator matrix of the code. Its size of size , and it is a “wide” matrix since we have . The generator matrix fully defines the code, i.e. if we know the generator matrix, we know everything about the code.

The vector is the information word, of size .

Such a vector-matrix multiplication can be interpreted as follows: the resulting codeword is a linear combination of the rows in the matrix , with the coefficients given by the information word . This means the rows of are a basis for the linear block code. All the vector space theory we discussed before applies here:

the rows of the generator matrix are a basis for the codewords

all codewords are obtained by linear combinations of the rows of the generator matrix, i.e. by multiplcation with

the information word gives the coefficients of the linear combination

for each information word , there is a corresponding codeword obtained by multiplying with

Note that the equation could also be transposed, i.e. use column vectors instead of row vectors, but it is more customary to use row vectors here.

This way of generating codewords guarantees that the code is linear. Indeed, we can show that the sum of two codewords is also a codeword, by construction. If we have two codewords and , corresponding to two information words and , then the sum of the two codewords is still something multiplied with , so it is still a codeword.

3.3The control (parity-check) matrix¶

Every generator matrix has a related control matrix (also known as parity-check matrix) such that

The size of is .

The two matrices and are related, and one can always be deduced from the other.

The control matrix is very useful to check if a binary word is a codeword or not (i.e. for nearest neighbor error detection), based on the following theorem.

The theorem shows that all codewords generated with will produce 0 when multiplied with . On the other hand, all binary sequences that are not codewords will produce something different from 0 when multiplied with . In this way, multiplcation with can be used as a quick way to check if a binary sequence is a codeword or not. This is what error detection is all about.

The generator matrix and are related. In linear algebra terminology, is the matrix which generates the -dimensional subspace of the codewords, and the rows of are the “missing” dimensions of the subspace (the “orthogonal complement”)

In terms of matrix shape, and together form a full square matrix :

size of is

size of is

3.4Matrices [G] and [H] for systematic codes¶

For systematic codes, and have special forms (known as “standard” forms)

The generator matrix can be decomposed into two parts:

First part is the identity matrix. This copies the information bits in the resulting codeword.

Second part is some matrix , which generates the control bits

The control matrix is related to as follows:

First part is (the same as in , but transposed)

Second part is an identity matrix of corresponding size

Thus, one matrix can be derived from the other.

3.5Interpretation as parity bits¶

Multiplication with in standard form produces the codeword composed of two parts:

the information bits themselves (since the first part of is an identity matrix)

some additional control bits, which are linear combinations of the information bits, i.e. parity bits

Each column of corresponds to one parity bit in the codeword, and the values in the column define which bits are combined to create the parity bit.

The control matrix in standard form checks if parity bits correspond to information bits

Proof: write down the parity check equation (see example)

\smallskip

If all parity bits match the data, the result of multiplying with is 0. Otherwise it is , signaling that at least one parity bit does not check out.

Therefore, the generator & control matrices are just mathematical tools for easy computation and checking of parity bits. We’re still just computing and checking parity bits, but we do it easier with matrices.

3.6Syndrome-based error detection and correction¶

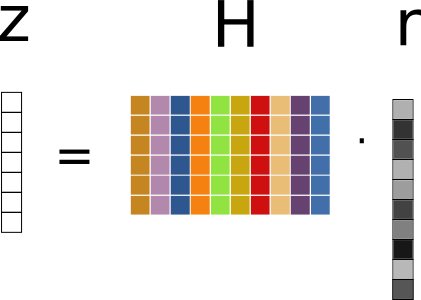

When considering error detection and correction, we multiply the received word with the control matrix .

The vector is called the syndrome of the received word .

The syndrome is a linear combination of the columns of , according to the column-wise interpretation of the multiplication.

{width=30%}

{width=30%}

The process of error detection with matrices is therefore as follows:

generate codewords with generator matrix:

send codeword on the channel

a random error word is applied on the channel

receive word

compute syndrome of :

Decide:

If => has no errors

If => has errors

For error correction, we need to locate the errors. For this, observe that the syndrome is the effect only of the error word , and not of the codeword (considering that is the sum of and ):

We identify the error word by searching exhaustively through all possible error words , until we find the first one that produces the same syndrome . Once we find it, we know where the errors are, and we can correct them.

The process is summarized as follows:

Create a syndrome table:

for every possible error word , compute the syndrome

start with error words with 1 error (most likely), then with 2 errors (less likely), and so on

Locate the syndrome in the table, read the corresponding error word

Find the correct word:

adding the error word again will invert the errored bits back to the originals

Example: at blackboard

3.7Computational complexity¶

When using matrices, the computational complexity is much lower than the scheme based on codeword tables.

Error detection requires a multiplication with , so the complexity is (size of is ).

Error correction still needs to check all possible error words, which means bad performance, but at least we don’t need to check all codewords. In practice, other tricks are used to make it much faster (see Hamming codes for example)

3.8Conditions on [H] for error detection and correction¶

The performance of the error detection and correction scheme, i.e. how many errors can be detected and corrected, can be deduced from the properties of the control matrix .

For error detection, we can detect errors if the syndrome is non-zero. Whenever an error word , when multiplied with , produces a zero syndrome, the algorithm is fooled into thinking there are no errors. Therefore, we need to make sure that the syndrome is non-zero. Considering that the syndrome is a linear combination of the columns of , with only the columns corresponding to values of 1 in the error word , we can say:

To detect a single error, every column of must be non-zero, such that the syndrome is non-zero whenever the error word has a single 1, no matter where it is located.

To detect two error, sum of any two columns of cannot be zero, i.e. all columns are different.

In general, to detect errors, we need that the sum of any or less columns of is non-zero.

For error correction, we use the syndrome table to identify the error word. When two different error words produce the same syndrome, the algorithm is unable to identify which is the true one. Therefore, error correction works only if the syndrome is unique:

To correct a single error, all columns of must be different, such that the syndrome is unique for all error words with a single 1.

To correct two errors, the sum of any two columns of must be different, such that the syndrome is unique for all error words with two 1s. We also

To correct errors: sum of any or less columns of are all different * much more difficult to obtain than for decoding

Conditions for error correction are more demanding than for detection

Note: Rearranging the columns of (the order of bits in the codeword) does not affect performance

4Hamming codes¶

4.1Structure of a Hamming codeword¶

From the structure of , we observe that Hamming codes are almost-systematic: they are systematic up to a rearrangement of bits.

is not systematic according to our previous definition, but we can make it systematic by simply rearranging the columns in a different order. All the columns of the identity matrix are among the columns of , just not in the correct locations as to form the identity matrix at the beginning or at the end. However, we could obtain a systematic code by a simple rearranging of the columns in , which corresponds to rearranging the bits in the codeword, which is a fairly benign operation.

For Hamming codes we use the notation (n,k) Hamming code, where:

n = codeword length = ,

k = number of information bits =

Examples:

Hamming code: bits in a codeword, 4 information bits, 3 control bits

Hamming code: bits in a codeword, 11 information bits, 4 control bits

Hamming code: bits in a codeword, 26 information bits, 5 control bits

Hamming code: bits in a codeword, 120 information bits, 7 control bits

Just like for any linear block code, a Hamming codeword has length equal to the width of (), and consists of information bits and control bits (also known as “parity bits”).

in the codeword, bits are control bits, and they correspond to the locations of the columns of that are identical to the columns of an identity matrix (having only a single 1 in each column)

the remaining bits are information bits

For example, the codeword of the Hamming(7,4) code, with the matrix above, consists of three control bits () and four information bits (), with the subscript indicating the position:

Similarly, the codeword for Hamming(15,11) has the following structure:

4.2Construction of Hamming codewords¶

Every Hamming code has a generator matrix , just like any other linear block code, but we don’t provide it explicitly, because it is hard to remember. (we could deduce it by rearranging the columns of to make it systematic first)

Instead, we can deduce the values from the equation system of the parity-check matrix , We know that every codeword satisfies the relation:

Writing this as an equation system we obtain, for example for Hamming(7,4):

Observe that in every equation there is a single control bit appearing, which means that it must be equal to the sum of the information bits in that equation:

We can apply the same process for other Hamming codes.

4.3Error detection and correction with Hamming codes¶

As for any linear block code, by analyzing the columns of we can deduce how many errors can be detected or corrected.

The detection and correction process is the same as with all linear block codes. Having received a word , we compute the syndrome:

If is non-zero, then there are errors. If there is a single error, is equal to the column of corresponding to the error position, which is exactly the position of the error, written in binary. Therefore, keep in mind:

For error correction with Hamming codes, the position of the error is given by reading the binary value of the syndrome.

It is important to note that the code cannot do both detection and correction simultaneously: when we obtain a non-zero syndrome, we cannot tell if it is a single error or two errors, so there is no way to know if we should attempt to fix the error (if it’s a single error) or just detect (if they are two errors). This must be decided beforehand, at the design stage.

For example, the syndrome in the previous example could also have come from two errors located on positions 1 and 5, and in this case fixing the error would have been incorrect. The system designer must decide at the design stage how to handle such cases:

either the code is used for detection only, and it never attempts to correct errors

or the code is used for correction only, and it assumes there is at most one error, and always attempts to correct it

The coding rate of a Hamming code is given by:

Since Hamming codes detect 2 and fix 1 error in codeword, longer Hamming codes are progressively weaker:

they have weaker error correction capability

better efficiency (higher coding rate )

more appropriate for transmissions with error probabilities

The choice of which code to use is ultimately based on the expected bit error probabilities during the transmission.

4.4Encoding & decoding example for Hamming(7,4)¶

See whiteboard.

In this example, encoding is done without the generator matrix , directly with the matrix , by finding the values of the parity bits , , such that

For a single error, the syndrome is the binary representation of the location of the error.

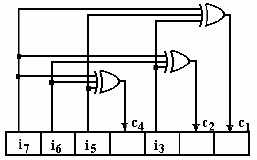

4.5Hardware circuits for Hamming(7,4)¶

Due to their simplicity, Hamming coding and decoding can easily be done with logic gates.

The Hamming encoder consists of:

A shift register to hold the codeword

Logic OR gates to compute the parity bits

The four information bits are loaded in the shift register, and the logic gates automatically compute the parity bits.

Hamming encoder circuit with logic gates

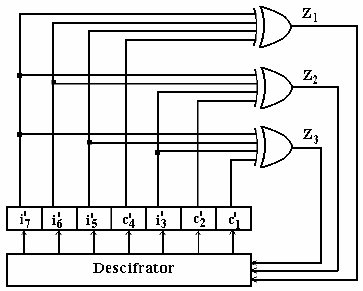

The Hamming decoder consists of:

A shift register to hold the received word

Logic OR gates to compute the bits of the syndrome ()

A binary decoder ("descifrator): is a base-2 to base-10 converter, activates the output corresponding to the binary input value, in this way fixing the error at the correct position

Hamming decoder circuit with logic gates

4.6SECDED Hamming codes¶

A normal Hamming code can correct 1 error or it can detect 2 errors, but we cannot differentiate between the two cases at runtime.

A solution to this is to add an additional parity bit, which helps identify the correct situation.

For example, the (8,4) SECDED Hamming code is based in the normal (7,4) Hamming code, with the additional bit in front:

where is defined as:

The parity check matrix of a SECDED Hamming code, , is based on the matrix of the normal code, extended by one row and one column:

4.7Encoding and decoding of SECDED Hamming codes¶

The encoding of SECDED Hamming codes can be done in two ways:

Compute the codeword directly using , as we did for normal Hamming codes,

or

Alternatively, compute the normal Hamming code first, and then prepend the additional bit = sum of all other bits

Decoding of SECDED Hamming codes makes use of the additional parity bit to decide whether there is a single error, which can be fixed, or there are two errors, when no fix is attempted.

The decoding process is as follows:

Compute the syndrome of the received word using :

The syndrome has one additional bit in front, , which corresponds to the extra line added on top of .

The new bit tells us whether the received matches the parity of the received word

if : the additional parity bit matches the parity of the received word, so there is an even number of errors (0 or 2)

: the additional parity bit does not match the parity of the received word, so there is an odd number of errors (1)

Decide which of the following cases happened:

If no error happened:

If 1 error happened: (does not match), the remaining part is non-zero.

In this case there is 1 error on position given by , and we can fix it.

If 2 errors happened: (does match, because the two errors cancel each other out), the remaining part is non-zero.

In this case we can just report that errors are detected, but we cannot fix them, since it is more than 1 error

If 3 errors happened: same as case 1, but we can’t differentiate it from the case of a single error.

With the help of the additional parity bit, we can now simultaneously differentiate between 1 error (perform correction) and 2 errors (can detect, but do not perform correction).

Also, if the design decision is made that error correction is never attempted, the code can detect up to 3 errors, but this cannot be differentiated from the case of 1 error. This can be verified from the columns of : the sum of any three columns is non-zero (because of the top row of 1’s), so we know that the code can detect up to three errors.

5Cyclic codes¶

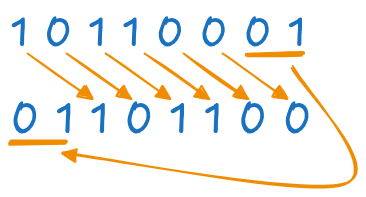

A “cyclic shift” means a circular (cyclic) rotation of a sequence of bits, in any direction, as illustrated below. Note that the ending bits are rotated to the start positions.

A cyclic shift of two positions to the right

Cyclic codes are a particular class of linear block codes, so all the theory up to now still applies: they have a generator matrix, parity check matrix etc. However, they can be implemented more efficient than general linear block codes (e.g. Hamming), using their special properties.

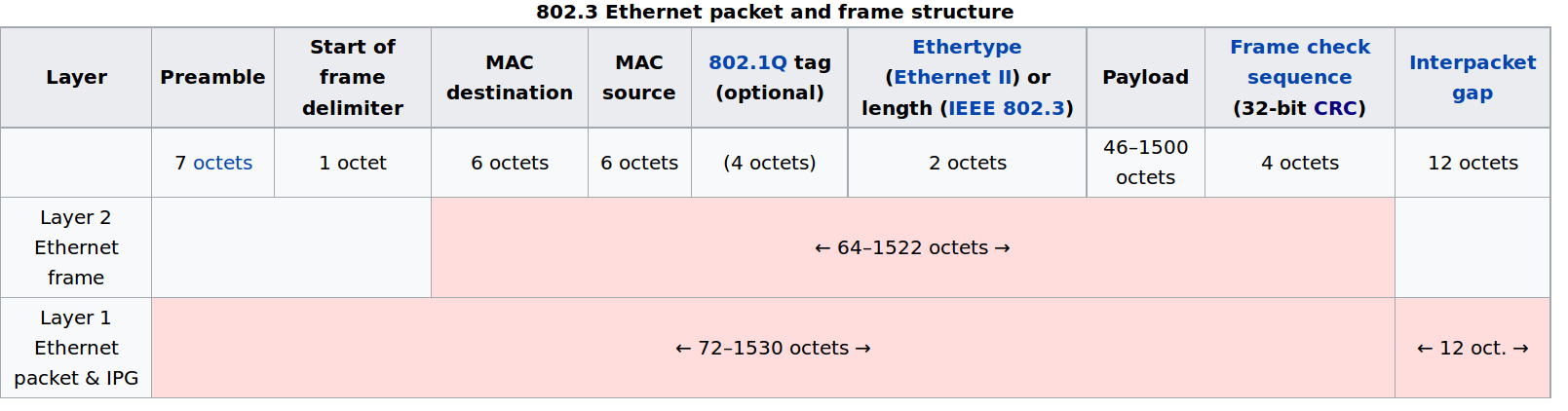

Cyclic codes are widely used in practice under the name CRC (Cyclic Redundancy Check), for example in network communications (Ethernet protocol), data storage (Flash memory) etc.

As an example, Ethernet frames (i.e., data packets on normal Internet connections) use CRC codes to check data integrity.

Structure of an Etherner frame, including a CRC field for error detection (“Frame check sequence (32-bit CRC)”)

5.1Binary polynomials¶

Working with cyclic codes relies heavily on binary polynomials.

Every binary sequence corresponds to a polynomial with binary coefficients:

for example:

From now on, when we refer to a “word” or “codeword” we also mean the corresponding polynomial.

We can perform all mathematical operations with these polynomials (addition, multiplication, division etc.), just like we do with normal polynomials, but considering the fact that we are in a binary world where . Cyclic coding relies on these operations with polynomials, and there exist efficient circuits for performing such operations (multiplications and divisions).

5.2Generator polynomial¶

No proof given.

This is fundamental property that codewords have, which is checked when performing error detection.

The generator polynomial must satisfy the following properties:

must have first and last coefficient equal to 1

must be a factor of

The degree of is , where:

n = the size of codeword (codeword polynomial has degree , which means bits)

k = the size of the information word (information polynomial has degree )

Since is the size of the codeword, and is the size of the information word, it follows that is the number of control bits added by the code:

The degree of is the number of control bits added by the code.

To give some examples of generator polynomials matching these properties, consider the following relation. We can write as a product of three polynomials (do the calculations and check this):

is the mentioned in the properties, so we have . Each of the three factors have first and last coefficient equal to 1. Therefore each factor can generate a different cyclic code, the degree of each factor being the number of control bits added by the code.

generates a (7,6) cyclic code (, degree=1 so there is 1 control bit, therefore information bits)

generates a (7,4) cyclic code (, degree=3, information bits)

generates another (7,4) cyclic code (, degree=3, information bits)

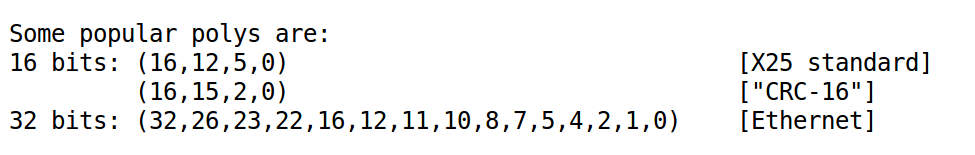

Several polynomials are standardized in various protocols:

Popular generator polynomials (image from *http://

Your turn: write the polynomials in mathematical form.

5.3Proving the cyclic property¶

Note that the proof relied on two properties mentioned before:

that a codeword is always a multiple of

that is a factor of

5.4Coding and decoding of cyclic codes¶

Cyclic codes can be used for detection or correction. In practice, they are used mostly for detection only (e.g. in Ethernet protocol), because there are other codes with better performance for correction.

Cyclic codes can be designed to be either systematic or non-systematic. but in practice the systematic variant is much preferred.

We will show the coding and decoding from 3 perspectives, all equivalent to each other:

“The mathematical way”, with polynomials

“The programming way”, as a programming algorithm

“The hardware way”, via circuit schematics

The mathematical way¶

First, a reminder on polynomial multiplication and division.

Two polynomials and can be multiplied, and their result has degree equal to the degree of + degree of .

A polynomials can be divided to another polynomial , producing a quotient and a remainder (which may be zero):

where:

is the the quotient (“câtul”);

is the remainder (“restul”);

the degree of is strictly smaller than the degree of .

Coding. We want to encode an information word with bits:

The non-systematic codeword generation relies simply on polynomial multiplication of with :

Note that the degrees in the equation match:

has degree ( bits)

has degree ( bits)

has degree ( bits)

However, this is non-systematic because we have no guarantee that the information bits are preserved in the codeword.

The systematic codeword generation is achieved using:

where is the remainder of dividing to :

In normal parlance, the remainder is also known as “the CRC value”.

Let us consider more closely the systematic codeword generation formula. First, is the resulting really a multiple of ? It is, because we have:

Second, why is the code systematic? Let’s analyze the systematic codeword generation step by step. We start from the information word:

Multiplying it with to obtain just shifts all bits to the right with positions:

Now, the remainder has degree strictly less than the degree of , which is , so it at most bits.

Therefore adding to to construct the codeword

means the two terms do not overlap, because has powers starting from upwards, whereas has powers strictly less than .

Alternatively, has zeros in front, whereas has at most bits, so they will not overlap when adding.

Therefore the code is systematic: the information bits are in the codeword, unchanged, in the positions corresponding to the higher powers of (towards the MSB), whereas the CRC value (i.e., ) occupies the first positions (towards the LSB).

We see now that a systematic cyclic codeword can be interpreted as computing a CRC value and appending it to the data. The CRC value represents the control bits added by the code.

Even though in our analysis and equations we always write it at the start of the codeword, in practice there exist different conventions for writing the codeword:

when writing the codewords from LSB to MSB (increasing order of degrees), the CRC appears in front (like here);

when writing the codewords from MSB to LSB (decreasing order of degrees), the CRC appears at the end (like in laboratory); it is same thing, just bit ordering is reversed

in practice, the CRC just needs to be appended somewhere; for example in an Ethernet frame there is a special field for it.

Decoding. Assume we receive a word

For error detection, we just want to see if is a codeword or not. For this, we check if the received still is a multiple of , by dividing to and checking the remainder:

if remainder of is 0, then it is a codeword, no errors present;

if remainder is non-zero, then it’s not a true codeword, errors detected.

Note that computing the remainder is the same to computing the CRC of the received data (including the CRC bits in the received word).

For error correction, ww need to use a syndrome table, just like with any linear block code, using the same general procedure:

build a table for all possible error words;

for each error code, divide by and compute the remainder

when the remainder is identical to the remainder obtained from our , we found the error word, so we can go on and correct the errors.

Example: at blackboard

The programming way¶

We show this method only for systematic codes, which is the mostly used variant.

A good reference for the procedure is found here: “A Painless Guide to CRC Error Detection Algorithms”*, Ross N. Williams

The key fact is that mathematical polynomial division algorithm is identical to XOR-ing the binary sequence succesively with :

align the binary sequence of under the leftmost 1

XOR the sequences

repeat

(just like in the lab)

See example at blackboard / lab.

In other words, we don’t have to think of binary polynomial division as a complicated mathematical operation. It is a simple, recursive bitwise XOR operation, easy to implement algorithmically in code. Polynomial division is the foundation of both (systematic) coding and decoding, so we just replace the mathematical computations with the equivalent binary algorithm.

Polynomial division = XORing succesively with

Coding. We have to perform the two steps:

Compute the CRC value , which is the remainder of divided to , using the binary algorithm above;

Put the CRC in front of the information word (mirrored, from LSB to MSB).

Decoding. We receive

Steps:

Mirror the sequence , so it is aligned MSB to LSB (CRC must be at the end)

For error detection:

compute the CRC of all sequence

if the remainder is 0 => no errors

if the remainder is non-zero => errors detected

Error correction:

use a syndrome table

build a table for all possible error words (same as with matrix codes)

for each error word, compute the CRC

when the resulting remainder is identical to the remainder obtained with , we found the error word => correct errors

The hardware way¶

There exist efficient circuits for polynomial multiplication and division, which can be used for systematic or non-systematic codeword generation.

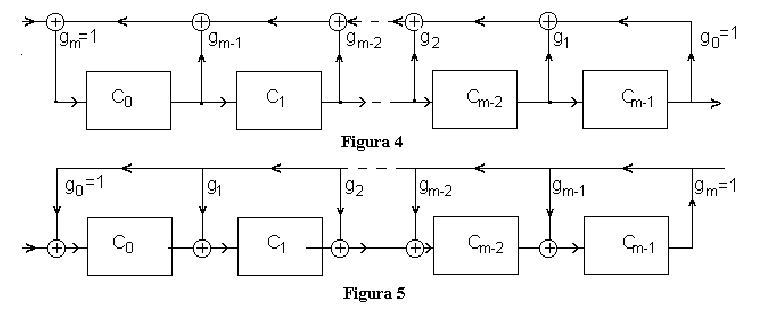

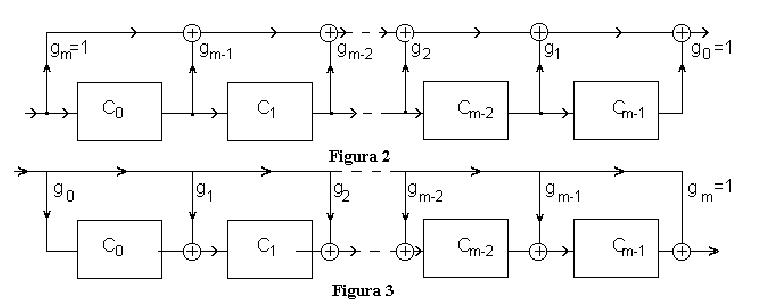

Two schematics for polynomial multiplication are presented below.

Circuits for polynomial multiplication

Operation of the multiplication circuits:

The circuits multiply an input polynomial with a polynomial defined by their structure

The input polynomial is applied at the input, 1 bit at a time, starting from highest degree

The output polynomial is obtained at the output, 1 bit at a time, starting from highest degree

Because output polynomial has larger degree, the circuit needs to operate a few more samples until the final result is obtained. During this time the input is 0.

Examples: at the whiteboard

Two schematics for division of binary polynomials are presented below.

Circuits for polynomial division

Operation of polynomial division circuits:

The circuits divide an input polynomial to a polynomial defined by their structure

The input polynomial is applied at the input, 1 bit at a time, starting from highest degree

The output polynomial is obtained at the output, 1 bit at a time, starting from highest degree

Because output polynomial has smaller degree, the circuit first outputs some zero values, until starting to output the result.

If the remainder is 0, all the cells remain with 0 at the end

Examples: at the whiteboard

A non-systematic cyclic encoder circuit relies simply on polynomial multiplication, without any other modifications, where the input is and the resulting output is the codeword .

Circuits for polynomial multiplication

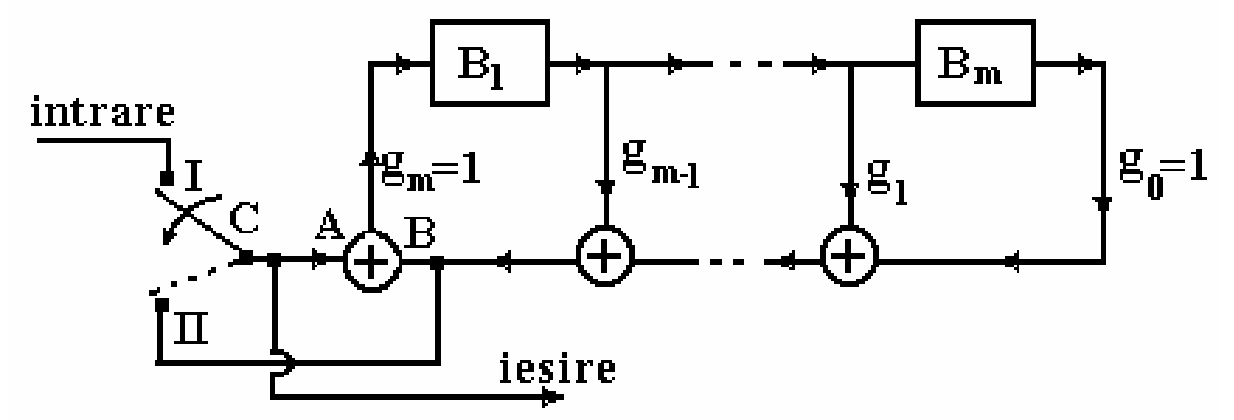

A systematic cyclic encoder circuit relies instead on a division circuit, which computes the CRC value which is appended:

Systematic cyclic encoder circuit

This schematic contains a division circuit (upper right part), and operates as follows:

Initially all cells are 0

First, the switch is in position I:

information bits are applied to the output and to the division circuit

first bits of the output are the information bits, so the codeword is indeed systematic

the input bits are also applied to the division circuit

Switch in position II:

some output bits are put at the output

the same output bits are also applied to the input of the division circuit

In the end all cells end up with value 0, because in phase II we add the input (A) with itself (B) at the input of the division circuit, so they cancel each other

Why is the output the desired codeword? Because:

has the information bits in the first part (systematic)

is a multiple of

Why is it a multiple of ? Because:

the output is always applied also to the input of the division circuit, in both phases of operation

after division, the cells end up in 0, which means there is no remainder of division

Side note: we haven’t really explained why the output is a codeword, we just showed that it is so, but this is enough.